Below are my summaries and reflections from reading the text The Systematic Design of Instruction.

Reflections on Chapters 1 and 2 of The Systematic Design of Instruction

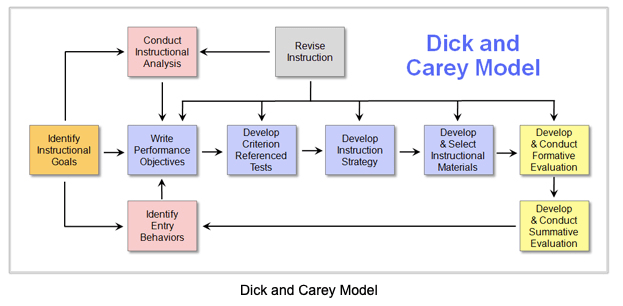

As I began reading Chapter 1 of the text, “Introduction to Instructional Design”, I was immediately struck by the classification of learning as a “system”, which seems like such a sterile description of the lively and frustrating job of educating teenagers day in and day out. Luckily, the authors recognize this, as they state “instructional systems include the human component and are therefore complex and dynamic, requiring constant monitoring and adjustment” (Dick, Carey, & Carey, 2015, p. 2). As a math teacher, I am drawn to problem solving, and I see the value in having a more formalized approach to instruction through the ID model. The 10 components are aspects I already consider when planning instruction, though perhaps not as intentionally as needed. A current struggle I have with instructional design is how to reach my room full of very diverse students – the authors state instruction “can be designed … for use on multiple occasions with multiple learners” (Dick et al., 2015, p. 9) and I wonder how true that is in a classroom setting. I have found that even if I teach the same lesson to two different class periods, the performance and reception can vary wildly based upon the makeup of the students. Even within the same class, some students have different needs than others, requiring a huge degree of differentiation. However, learning about this method of design will help me to broaden my abilities to promote student success, so I am looking forward to working through the process.

Chapter 2 of the text, “Identifying Instructional Goals Using Front-End Analysis”, gives details on the first component of ID and has given me the goal of moving past my content outline approach to instruction towards a more performance technology/performance improvement model. As teachers, particularly in the current political climate, the focus on the almighty standards gives us tunnel vision. While poor student performance on assessments may just require new or additional instruction, there are also a myriad of other influences to examine that may help us solve the problem. Even if the issue is mainly instructional, a needs assessment can help focus my efforts. The authors define the needs assessment as an equation, which warms this math teacher’s heart: Desired status – Actual status = Need (Dick et al., 2015, p. 23). The longer I have taught, the more I have realized it is dangerous for me to assume prior knowledge of a topic, or to assume what gaps and misconceptions the students may have. Including pre-tests for units is a way I have been performing needs analysis without even knowing it had a name. This process does allow me to focus in on the specific needs of a group and avoid unnecessary instruction – which teenagers are NOT fans of and will let you know it!
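Since the authors frame the needs assessment as a subtraction, here is a minimal sketch of how that equation might be applied to unit pre-test results. The skill names, the target of 80, and the scores are all hypothetical numbers I made up for illustration; they are not from the text or from my actual classes.

```python
# A rough sketch of "Desired status - Actual status = Need".
# All skill names and scores below are hypothetical, for illustration only.

def needs(desired, actual):
    """Return the gap (desired - actual) per skill, keeping only positive gaps."""
    gaps = {}
    for skill, target in desired.items():
        gap = target - actual.get(skill, 0)
        if gap > 0:  # no need exists where students already meet the target
            gaps[skill] = gap
    return gaps

# Desired status: the class average I would like to see on each skill.
desired_status = {"skill A": 80, "skill B": 80, "skill C": 80}
# Actual status: hypothetical class averages from a unit pre-test.
actual_status = {"skill A": 85, "skill B": 55, "skill C": 40}

gaps = needs(desired_status, actual_status)

# List the largest needs first so instruction time can go where it matters most.
for skill, gap in sorted(gaps.items(), key=lambda item: item[1], reverse=True):
    print(f"{skill}: need = {gap} points")
```

In this made-up case, skill A drops out entirely because the class already exceeds the target, which is the "avoid unnecessary instruction" idea in numerical form.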

Further in Chapter 2, the criteria for establishing instructional goals are laid out, and I found it would be a very helpful process for me to delve into with regard to the standards. I have found several of the high school math standards to have some so-called fuzzy language (i.e., “the student will understand quadratic functions” – without defining what it means to understand). The authors provide a basic checklist of what a complete goal statement looks like, consisting of a description of the following: the learners, what the learners will be able to do in the performance context, the performance context in which the skills will be applied, and the tools that will be available to the learners in the performance context (Dick et al., 2015, p. 27). I plan to begin looking at the content standards through this lens with my PLC so we can more adequately plan how to approach teaching them. The authors admit “it is also difficult to predict how long learners will take to master the instructional goals” (Dick et al., 2015, p. 28), so even when planning instructional goals it is acknowledged that the actual lessons will prove harder to plan. I hope to draw on my previous teaching experience to help me determine a rough outline of what to expect.

Overall, the first two chapters have provided an insight into the instructional design process and its first step of identifying instructional goals. While it is admittedly a much more rigid and in-depth process than I normally take to planning, I am cautiously optimistic that it will prove a valuable experience that I can transfer to my classroom.

References:

Dick, W., Carey, L., & Carey, J. O. (2015). The systematic design of instruction (8th ed.). Upper Saddle River, NJ: Pearson.

Reflections on Chapter 3 of The Systematic Design of Instruction

The topic of this chapter is conducting a goal analysis. Only after the goal has been identified can the goal analysis take place. As the authors state, this requires the designer to ask “What exactly would the learners be doing if they were accomplishing this goal successfully?” (Dick, Carey, & Carey, 2015, p. 42). This is an important distinction for me as an educator to make, as the focus is not on describing the actions of the instructor, but instead the actions of the learner. Because I am so used to planning from an educator’s viewpoint, shifting to the learner’s viewpoint may be more of a problem (specifically as I am a “subject matter expert”). Furthermore, when describing the specific steps, “the statement of each step must include a verb that describes an observable behavior” (Dick et al., 2015, p. 47). Observable behavior means the steps of the goal must be able to be seen, not just inferred – which harkens back to the fuzzy language idea in goal setting. In my own classroom, it would be a mistake to state a step of my goal as “understanding when to use the quadratic formula”, as “understanding” is not an observable behavior. I would need to use something observable such as “correctly substitutes the values for a, b, and c into the quadratic formula”.
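To make that observable step concrete, here is a small sketch of the substitution itself, written so each value of a, b, and c can be checked as it goes into the formula. The function name and the sample equation are my own choices for illustration, not something from the text.

```python
import math

def quadratic_roots(a, b, c):
    """Substitute a, b, c into x = (-b ± sqrt(b^2 - 4ac)) / (2a); return real roots."""
    if a == 0:
        raise ValueError("a must be nonzero for a quadratic equation")
    discriminant = b * b - 4 * a * c
    if discriminant < 0:
        return ()  # no real solutions
    root = math.sqrt(discriminant)
    # Both roots are returned (they are equal when the discriminant is 0).
    return tuple(sorted(((-b - root) / (2 * a), (-b + root) / (2 * a))))

# Hypothetical example: x^2 - 5x + 6 = 0, so a = 1, b = -5, c = 6.
print(quadratic_roots(1, -5, 6))
```

The point of the sketch is that each substitution is something I could watch a student do and mark as correct or incorrect, unlike “understanding”.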

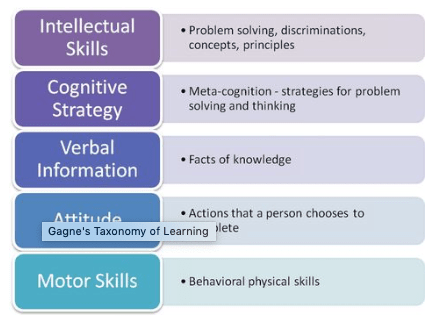

The first step of the goal analysis is determining which domain of learning the goal falls into according to Gagné’s scheme (verbal information, intellectual skills, psychomotor skills, attitudes, and/or cognitive strategies). As a math teacher, I feel most goals for my students would fall under the domains of intellectual skills and cognitive strategies.

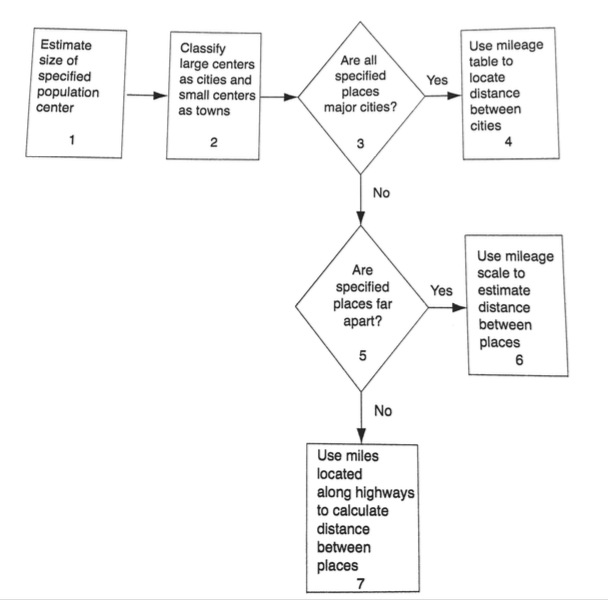

After determining the domains of learning, appropriate steps to achieve the goal can be identified. As I predict most of my goals are intellectual skills, this means identifying the observable steps learners would take, along with possible decisions that may be made (in a “yes/no” flowchart form). The order of the steps would be denoted by arrows (see example from text below).

As I begin to work on my project for the course, I look forward to working through the process. The authors stress that the clarity of the final draft is often lacking at first, and they give examples of typical problems to look for such as unneeded steps, too small or too large steps, or misplaced steps (Dick et al., 2015, p. 56). Recognizing that I am an ID novice, I expect that my first approach will not be perfect, but I look forward to continuing with the process and seeing how it shapes up.

References:

Dick, W., Carey, L., & Carey, J. O. (2015). The systematic design of instruction (8th ed.). Upper Saddle River, NJ: Pearson.

Reflections on Chapter 4 of The Systematic Design of Instruction

This chapter deals with the second part of the instructional analysis step, namely identifying the subordinate and entry skills needed to achieve our goals. This seems to be a rather tricky step, as it is easy for me, as a subject matter expert, to assume my students will possess certain prior knowledge that they may actually be lacking, or to assume they need instruction in a skill they already possess. The authors provide a few questions that can help guide the process, citing Gagné’s “What must the student already know so that, with a minimal amount of instruction, this task can be learned?” and “What is it that the student must already know how to do, the absence of which would make it impossible to learn this subordinate skill?” (Dick, Carey, & Carey, 2015, p. 62). I actually feel comfortable with this approach, as many topics in math are based on basic procedural knowledge and linearity. I am used to “unpacking” the standards to determine objectives for my learners. I definitely see myself using the hierarchical analysis structure. Already, I am beginning to see how interconnected the analysis of learners will be to this step, as every year a new group of students would present differences in the subordinate and entry level skills needed based upon their prior learning. In this case, pre-tests can be utilized as mentioned by the authors (Dick et al., 2015, p. 74), but that does depend on feasibility. For me, teaching on a block schedule where I only have one semester with my students means time is always an issue.

I was happy to see the authors address one of my biggest frustrations as a classroom teacher: hugely varied ability levels in the same class. As the authors state, “it is often found that only some of the intended learners have the entry skills. What accommodations can be made for this situation … there are usually no easy answers to this all-too-common situation” (Dick et al, 2015, p. 77). It is very difficult to decide how to handle instruction when some students have mastered all the prior skills, some students have only mastered a few, and others have mastered none of the prior skills. With a limited time frame and 29 other students in the room, it is difficult to make sure every individual learner gets what they need. I think perhaps ID will be good for planning hypothetical instruction but will need much revision once the students are introduced into the system.

References:

Dick, W., Carey, L., & Carey, J. O. (2015). The systematic design of instruction (8th ed.). Upper Saddle River, NJ: Pearson.

PROJECT UPDATE

As part of this course, we will be dealing with an authentic instructional unit and applying the Dick and Carey ID model. I was planning to do my project on a traditional unit taught in Algebra 2 on radicals. I chose this due to the fact that learners often struggle with the unit, so I thought a more analytical approach to instruction might help me to design a more efficient instructional model. However … I am now considering instead doing my project on a professional development (PD) session I will be leading for other math teachers at my school in January. So many classmates in this course have mentioned poor PD sessions they attended (as I did too!) so I thought maybe if I took the ID approach now before the PD session I could have a clearer vision and plan for what I want to make sure the session attendees can do. Maybe my session won’t end up a typical boring PD session! It is also interesting because this wouldn’t be strictly intellectual skills (as my Algebra 2 unit would be) but also include some attitudinal skills. I like a challenge! I have still not made my final decision but will within the next couple of days and start working on my draft.

Reflections on Chapters 5 & 6 of The Systematic Design of Instruction

In these chapters the authors described the next steps in the ID process, analyzing learners and contexts (Chapter 5) and writing performance objectives (Chapter 6). As a current public school teacher, I felt familiar with both of these processes as I began reading. As a high school math teacher, much of the material I teach is “silo-ed”, as in presented out of context – procedural or conceptual skills in isolation. The authors’ statement “Of equal importance at this point in the design process are analyses of the context in which learning occurs and the context in which the learners use their newly acquired skills” (Dick, Carey, & Carey, 2015, p. 96) resonated with me. The hope is that students can transfer their learning to authentic, real world tasks. Unfortunately, this is artificial in the classroom, which often leads to a loss of student motivation (Dick et al., 2015, p. 103).

When going through the process of analyzing learners, I find I have often focused on the first aspect, the general characteristics of the learners themselves. As teaching is a “people business”, relationship building is an important step. While I use surveys, past test data, etc., for me the intellectual skills cannot be separated from the whole person – the student sitting in front of me. I have had students before who scored highly on MAPs testing and then failed my course because they just had no motivation. So while I believe in the power of learning things about your students beforehand, it is important to continue the process during instruction. The final aspects involve analyzing the performance and learning contexts. Unfortunately for my students, the performance context is usually just a math classroom, or another classroom for testing, rather than a real-world authentic setting. This is the disconnect currently happening in SC schools that graduate students who seem to have little to no workplace skills. It will continue to be an issue in the standardized test driven educational system. Since I am a school teacher, the learning context (classroom) is pretty much identical to the current performance context. So, I do think that makes things comfortable for the student, though perhaps not realistic for the future, when they will have to learn in one place and perform their tasks in another.

Chapter 6 delves into writing performance objectives. At my current school, we teachers are required to write our daily objectives on the board, a practice that, as even the authors note, has “a slight but significant advantage for students who are informed” (Dick et al., 2015, p. 118). The goal is, in simplest terms, “a segment of what students will be able to do in the performance context” (Dick et al., 2015, p. 119). In a public school setting, where the learning context and performance context are similar, this basically means that a student will learn the objectives and then perform well on the assessment. Without objectives, the learning could veer off track. In my classroom our objectives are derived from the state, using the SC math standards for our particular courses. What is interesting is that there are some standards that use vague verbs like “understand”, “make sense of”, etc. that Gagné warns against. Perhaps an ID expert can help revise the standards in the future!

This chapter breaks down the performance objective into three parts: the condition, behavior, and criteria. One should begin with the behavior (skill/concepts), and then add in the condition (available tools/resources) and criteria (acceptable performance of skill) (Dick et al., 2015, p. 132). For me as a classroom teacher, the standard would represent the behavior (generally an intellectual skill). The condition would be my classroom, with whatever time limit and tools apply (such as a calculator, laptop, ruler, etc.). The criteria would vary, depending on the skill. For a procedural skill, it would be easy to set the criterion as just arriving at the correct answer using algebraic skills. If the skill were more involved, like analyzing a problem that has many methods, a rubric that includes a description of an acceptable response would be a better method. In my classroom I grade both ways depending on the task at hand. I feel like this chapter helped me to realize that the standards themselves are only part of the story, and the conditions and criteria are equally important when transforming basic standards into full performance objectives.
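To see the three parts side by side, here is a minimal sketch that stores an objective as a condition, a behavior, and criteria, and then assembles them into one statement. The class name, the sentence template, and the sample objective (including the "4 of 5 problems" criterion) are hypothetical; they are my own illustration rather than a format given by the authors.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class PerformanceObjective:
    """A three-part objective: condition, behavior, criteria (illustrative only)."""
    condition: str  # tools/resources available to the learner
    behavior: str   # the observable skill, usually drawn from the standard
    criteria: str   # what counts as acceptable performance

    def statement(self) -> str:
        # Assemble the parts in the order: condition, behavior, criteria.
        return f"{self.condition}, the learner will {self.behavior}, {self.criteria}."

# Hypothetical objective for a procedural skill.
objective = PerformanceObjective(
    condition="Given a quadratic equation in standard form and a calculator",
    behavior="solve the equation using the quadratic formula",
    criteria="arriving at the correct solutions on at least 4 of 5 problems",
)
print(objective.statement())
```

Writing it this way makes it obvious when a standard only supplies the behavior and I still owe the condition and criteria.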

References:

Dick, W., Carey, L., & Carey, J. O. (2015). The systematic design of instruction (8th ed.). Upper Saddle River, NJ: Pearson.

Reflections on Chapters 7 & 8 of The Systematic Design of Instruction

Chapter 7 delves into a thorny topic these days, developing assessment instruments. As a current public school teacher, I feel like I have been exposed to any and every type of test out there – criterion and/or norm referenced. It does make sense that the assessment should be developed after the performance objectives have been chosen, as we can make sure to align the items appropriately and can then backwards design instruction towards the assessment criteria. In this case I am thinking of the posttest, which “should assess all the objectives, especially focusing on the terminal objective” (Dick, Carey, & Carey, 2015, p. 140). In reality, the authors mention we should also consider the need for any entry skills tests, pretests, and/or practice tests before the posttest. After deciding the type of test, the design process begins. This, of course, depends on the skills from our objectives (verbal, intellectual, motor, etc.). For example, the authors mention the verbal information domain can be assessed in formats such as short answer or multiple choice, while the intellectual skills domain may require something more complex like the creation of a project or a performance (Dick et al., 2015, p. 141). In my classroom I would say many of my posttests include a variety of item types including short answer, multiple choice, essay, and occasionally product development. These varied types help me to assess my desired mathematical behaviors including solving, justifying, discriminating, constructing, and generating. When using projects (product development type items) I have had to create a rubric for both guiding learners and assessing the final outcome, which the authors speak to as a series of steps (Dick et al., 2015, p. 149). I was, however, most interested in the section concerning portfolio assessments. I have just this year included portfolio assessments in my Trigonometry classes. Students collect artifacts from class and place them into a digital portfolio. They are also required to reflect on their products and the learning process. They evaluate themselves based upon the rubric provided. The authors state “portfolio assessment is not appropriate for all instruction because it is very time consuming and expensive” (Dick et al., 2015, p. 154); however, I have found it to be a valuable measure for my students, many of whom have anxiety and “unfriendly” feelings towards math. A main idea from the chapter is to design with knowledge of your learners, the instructional and performance contexts, and clarity of materials.

Chapter 8 moves into the planning instructional strategies portion of the ID process. Now that we have written the objectives and assessment items, the micro and macro strategies can be developed. The authors define an instructional strategy as “the general components of a set of instructional materials and the procedures used with those materials to enable student mastery of learning outcomes” (Dick et al., 2015, p. 174). Or, as most teachers would call it, lesson and unit planning. The authors use Gagné’s events of instruction to organize instruction: preinstructional activities, content presentation, learner participation, assessment, and follow-through activities (Dick et al., 2015, p. 175). For me personally, I find preinstructional activities can be the most difficult. This involves tapping into the students’ motivation. While I always do my best at this, the fact is some students are not interested in the content, and some content in my area (math) is not easily applicable to real world/authentic tasks (e.g., dividing imaginary numbers). Also, the authors quote Keller’s work in motivation and the importance of “satisfaction”, both intrinsic and extrinsic (Dick et al., 2015, p. 176). While I can provide extrinsic rewards, it is much harder for me as an instructor to help motivate students who have very little intrinsic motivation.

While the Dick and Carey model is a cognitive based instructional model, the authors also speak to the use of constructivist strategies at this step. As a math teacher, I have definitely used this type of teaching in my classroom, such as having small groups work on an authentic, open ended task (like problem based learning). In these cases, I am more focused on the process than the product. The authors confirm “constructive designers have been particularly effective in describing instructional strategies for learning to solve ill-defined problems” (Dick et al., 2015, p. 195). In cases using PBL, inquiry based learning, or discovery based learning, a constructivist learning environment (CLE) can be blended into the techniques we are using. As the authors put it, “the cognitive assumption is that the content drives the system, whereas the learner is the driving factor in constructivism” (Dick et al., 2015, p. 196). This speaks to me as my school district focuses more on personalized learning. Another aspect I appreciate about CLE is the way it can engender cognitive flexibility. I want my students to be able to use multiple approaches and see multiple representations of our content. The authors concede that using a CLE instructional strategy means “some assumptions of the Dick and Carey Model may be compromised” (Dick et al., 2015, p. 202), but as long as we have attended to the instructional goals and the needs of the learners we can effectively use a blended technique.

References:

Dick, W., Carey, L., & Carey, J. O. (2015). The systematic design of instruction (8th ed.). Upper Saddle River, NJ: Pearson.

Reflections on Chapters 9 & 10 of The Systematic Design of Instruction

Chapters 9 and 10 deal with the steps for developing an instructional strategy and then developing and selecting instructional materials. Deciding on the strategy must come first, as it takes into account not just the objectives and media, but also the learners and abstract content (Dick, Carey, & Carey, 2015, p. 220). Delivery systems are varied, ranging from the traditional classroom model (one teacher with a group of students), to computer based, to self-paced, to distance ed, etc. As a public school teacher, I am somewhat bound by the traditional classroom model, although blended learning (integrating some online delivery options within) is starting to gain traction. I do find that I use student grouping frequently, which the authors mention as an instructional strategy with value, even if not directly related to the standards: “…social interaction and changes in student grouping provide variety and interest value even when not specifically required in the performance context or objectives” (Dick et al., 2015, p. 224). For teenage students like mine, attending to this need for social interaction is a consideration whenever I design my instructional strategies, as it also allows me to incorporate the math process standard for communicating ideas, and fits within the South Carolina Profile of a High School Graduate (with social skills). So, I think it is important not to focus solely on the content standards to the detriment of designing an overall learning experience.

Chapter 9 also speaks to the importance of feedback and choosing media that will allow for corrective feedback within the learning process (Dick et al., 2015, p. 227). This is something that I have been incorporating into my classroom more (through the use of www.deltamath.com) and I agree that it has been a good change, because students can get immediate feedback – something I couldn’t always provide to every individual learner in a timely fashion before. The authors also mention how the design must take into account the pre-instructional, assessment, and follow-through learning components, not just the instructional time. Assigning the objectives may require some adjustments (or as we say in the education business, we may need to “monitor and adjust”) but ALL of this must be done before confirming the media selection and delivery systems. Then, and only then, should we commence with planning the actual instructional materials (Dick et al., 2015, p. 246).

Chapter 10 begins with the idea of “individualized instruction” – or personalized learning. This is the current buzzword in education as technology becomes more prevalent and students are moving away from the factory-school model towards one with personalized pathways. The authors suggest producing “self-instructional” materials when attempting ID, meaning materials that should not need any intervention from an instructor or other students. “As a first effort, however, learning components such as motivation, content, practice, and feedback should be built into the instructional materials. If you begin your development with the instructor included in the instructional process, it is very easy to use the instructor as a crutch to deliver the instruction” (Dick et al., 2015, p. 252). In my own classroom teaching, when developing blended models, I feel like this is somewhat my downfall. Students are used to having me explain or model concepts, and resist when I shift the impetus for learning the material onto them. However, I do think this makes sense as a design strategy. When it comes to the selection of media and delivery systems, I am currently somewhat tied by my surroundings. As a 1:1 district, I can use videos/online media when designing instruction, but the students are required by law to be physically in the building with me during instructional time … the question becomes how to best use that time while also making sure I am not using myself as a “crutch” for disseminating information.

The remainder of Chapter 10 deals with the components of instructional packages (such as materials, assessments, and management). Pre-existing materials, while convenient, still need to be analyzed in the context of the learners and the performance context, as there is no one-size-fits-all. A warning from the authors states “it is easy to fall into a pattern of thinking that instructional design is a strictly linear concept, but this is misleading, because tracking design and development activities reveals a sequence of overlapping and circular patterns that duplicates the iterative product design and development process” (Dick et al., 2015, p. 263). The authors also emphasize understanding that the development represents a draft. I compare it to when I write a lesson for my class: after I teach it the first time, I make notes and revisions that help me change the lesson and make it work better for my learners. By the end of this stage of instructional design, there should be a “draft” of the instructional materials, assessments, and instructor’s manual. I feel this process is very similar to the short-range (unit) planning that I do in my school.

References:

Dick, W., Carey, L., & Carey, J. O. (2015). The systematic design of instruction (8th ed.). Upper Saddle River, NJ: Pearson.

Reflections on Chapters 11, 12, & 13 of The Systematic Design of Instruction

The three final chapters of the text deal with formative evaluations, revising instructional material, and summative evaluations. As stated by the authors, formative evaluation is “the process designers use to obtain data for revising their instruction to make it more efficient and effective” (Dick, Carey, & Carey, 2015, p. 284). In a true ID situation, the formative evaluation would go through several stages, from a one-to-one evaluation with learners (the designer working individually with some learners), to small group trials (which have a more varied group of learners to represent the true range of the target learners), and finally to a field trial (in the true learning context). All along the way, the data collected from the evaluations is used to refine and improve instruction before the next stage. Speaking as a classroom teacher, we usually do not have the luxury of one-to-one and small group trials when designing curriculum units, and we must dive straight into field trials with our students. However, even in this situation we are able to gather data to improve instruction. I know that I have found just between first period and fourth period I can modify my instructional strategies to better reach my students – this is the basic outcome intended from formative evaluations. It is also important to understand that formative evaluations are for evaluating the instruction, not the learner!

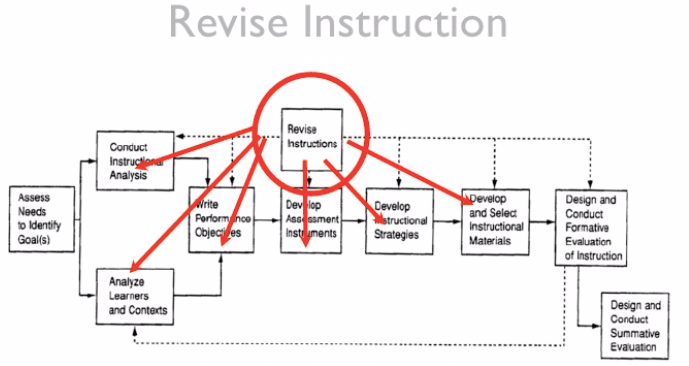

Chapter 12 delves into revising instructional materials. Because this occurs after the formative evaluation step, it involves circling back to previous parts of the ID model. In fact, revising can touch on multiple components of the ID process, like instructional strategies and materials, assessment instruments, writing objectives, etc. See image below:

The revision component synthesizes all the formative evaluations to locate all the potential problems in the instructional materials (Dick et al., 2015, p. 336). Using pretests and posttests, along with attitude questionnaires, gives us data we can use to plan for our revisions.

Once the instruction has been completed, the final component of the Dick and Carey Model is designing and conducting summative evaluations. Here is where all the work meets its intended purpose: Is the instruction effective in the performance context (does the instruction have the impact intended)? As the authors state, “the main purpose in summative evaluation is to determine whether given instruction meets expectations” (Dick et al., 2015, p. 343). This is where, as a practicing classroom teacher, I am often at odds with academic learning theorists. When using a pre-scripted curriculum or incorporating the “it-never-fails” new teaching method going around, how does it really work in a classroom full of learners like mine? I personally feel that this is why so many educational initiatives fail: no real summative evaluations are completed and analyzed to see if the instruction is really working as intended for the intended learners.

Something of note is the call for “external evaluators” at this point. The evaluator of the summative evaluation is often not the designer or instructor – as they may have some emotional ties and biases that don’t allow them to be truly objective in their evaluations. I feel this is similar to a teacher putting hours into designing a new lesson, thinking it will be great, yet afterwards realizing the students do not seem to understand the material any better. The teacher, knowing all the time and effort that went into the activity, is reluctant to shelve it, and may find justifications for why it is better (even if it is not objectively so). However, I struggle to determine who would be the external evaluator in this school scenario. Most district employees evaluate test scores, but not the actual instruction that led to those test scores. The same goes for principals.

As I finished the text I was struck by how so many things I do as a teacher are intuitively part of the ID process. However, I do like that this book laid it out in a more discrete way with its 10 components. While I have always felt I have done a good job of analyzing learners and setting goals, I realize that I need to focus more on the instructional strategies and materials, particularly using formative and summative evaluations to revise them. I am very used to assessing the learners and assuming that is the same as assessing my instruction, when the two are not interchangeable. While it is not feasible for me to implement all the stages of formative evaluation, I can at least incorporate more evaluation of attitudes and impact to truly see where I need to continue revising my instruction. My goal is always to improve as a teacher, and I feel having a thorough knowledge of ID has helped me to focus and given me concrete methods for improving.